Rapprentice: teaching robots by example

Dependencies and build procedure

Download the source from https://github.com/joschu/rapprentice

Dependencies:

- PCL >= 1.6

- trajopt, devel branch, built with the BUILD_CLOUDPROC option enabled.

- Python modules: scipy, h5py, networkx, cv2 (OpenCV)
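Before building, it can help to confirm which Python dependencies are already importable. The sketch below uses only the standard library. The module names trajoptpy and cloudprocpy are assumptions about what a trajopt build with BUILD_CLOUDPROC installs; adjust the list to match your build.

```python
import importlib.util

# The first four come from the dependency list above; trajoptpy and
# cloudprocpy are ASSUMED names for the trajopt Python bindings.
REQUIRED_MODULES = ["scipy", "h5py", "networkx", "cv2", "trajoptpy", "cloudprocpy"]


def missing_modules(names):
    """Return the top-level module names that cannot be found on sys.path."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(REQUIRED_MODULES)
    if missing:
        print("Missing modules:", ", ".join(missing))
    else:
        print("All Python dependencies found.")
```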

Build procedure:

- Build the fastrapp subdirectory with the usual CMake procedure. It contains a Boost.Python module with a few functions and a program for recording RGB+Depth video from PrimeSense cameras. The steps below assume your build directory is fastrapp_build_dir.

- Add the rapprentice root directory and fastrapp_build_dir/lib to your PYTHONPATH.

- Add fastrapp_build_dir/bin to your $PATH.
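If you launch the tools from a script rather than from a configured shell, the two environment changes above can be computed as follows. This is a sketch: rapprentice_env is a hypothetical helper, and the paths in the usage example are placeholders for your own checkout and build directory.

```python
import os


def rapprentice_env(rapprentice_root, fastrapp_build_dir, environ=None):
    """Return PYTHONPATH and PATH with the rapprentice entries prepended."""
    environ = os.environ if environ is None else environ
    pythonpath = [rapprentice_root, os.path.join(fastrapp_build_dir, "lib")]
    path = [os.path.join(fastrapp_build_dir, "bin")]
    # Keep whatever was already set, after the new entries.
    if environ.get("PYTHONPATH"):
        pythonpath.append(environ["PYTHONPATH"])
    if environ.get("PATH"):
        path.append(environ["PATH"])
    return {"PYTHONPATH": os.pathsep.join(pythonpath),
            "PATH": os.pathsep.join(path)}


if __name__ == "__main__":
    # Placeholder locations; replace with your own.
    env = rapprentice_env(os.path.expanduser("~/src/rapprentice"),
                          os.path.expanduser("~/build/fastrapp"))
    for key, value in env.items():
        print(f'export {key}="{value}"')
```

To use the result with subprocess, merge it into a copy of os.environ before passing it as env=, since the returned dict holds only these two variables.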

Training

Overview

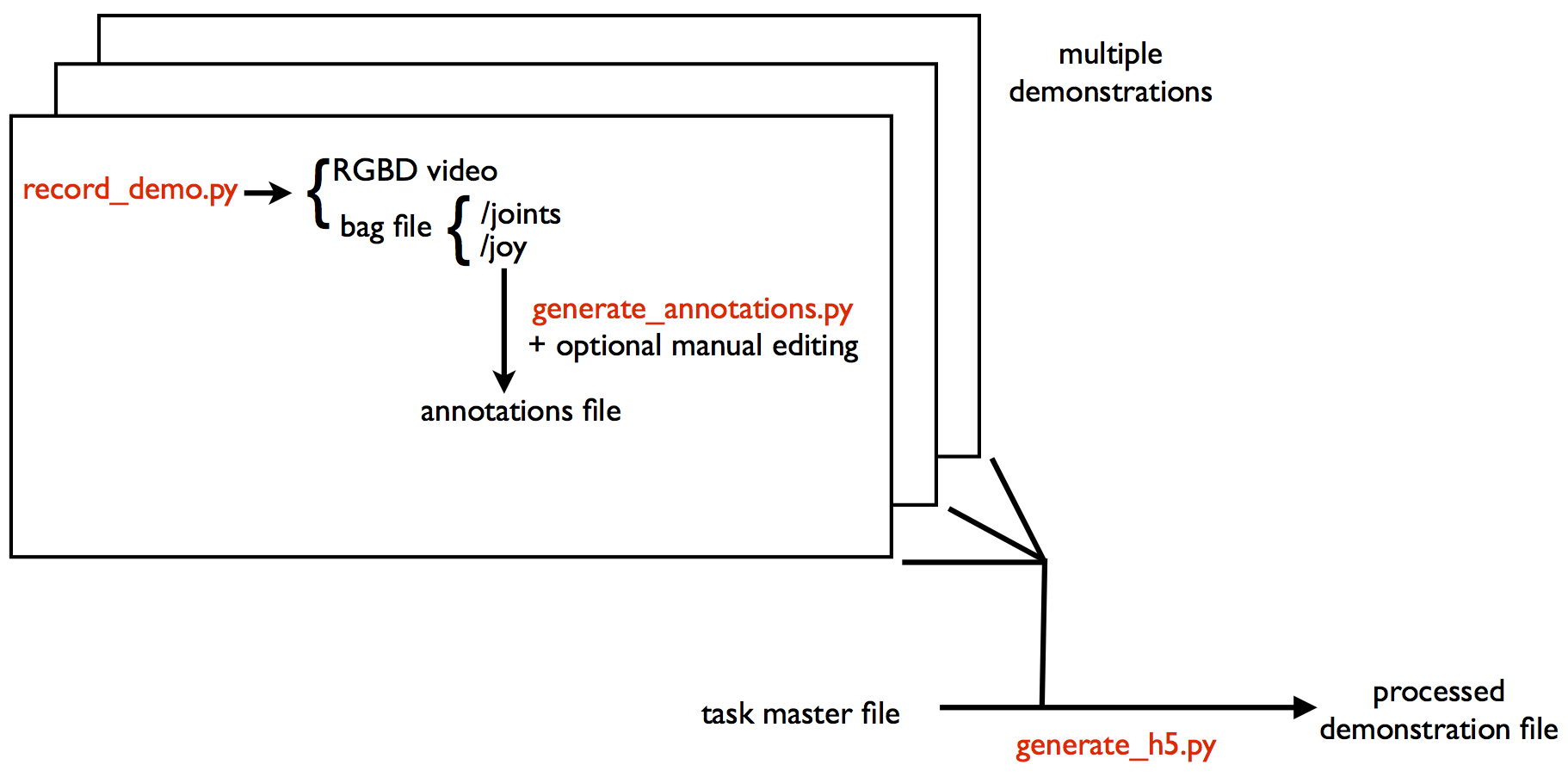

The training pipeline is illustrated below.

File formats:

- RGBD video: a directory containing a series of PNG files (depth images, in millimeters), JPG files (RGB images), and a file with the ROS timestamps.

- annotations file: yaml

- master task file: yaml

- processed demonstration file: hdf5

See the sampledata directory for examples of these formats.
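A minimal reader for the RGBD video layout might look like the sketch below. Several details are assumptions rather than documented facts: the timestamp file is taken to be stamps.txt (the name mentioned in the processing troubleshooting notes) with one timestamp per line, frames are assumed to be numbered from 0, and the rgb%05d.jpg / depth%05d.png patterns are hypothetical, so check all of these against the sampledata directory. Decoding the images themselves (for example with cv2.imread) is left out.

```python
import os

# HYPOTHETICAL file-name patterns; check them against the sampledata directory.
RGB_PATTERN = "rgb%05d.jpg"
DEPTH_PATTERN = "depth%05d.png"


def list_rgbd_frames(video_dir, rgb_pattern=RGB_PATTERN, depth_pattern=DEPTH_PATTERN):
    """Return (stamp, rgb_path, depth_path) tuples, one per timestamp.

    Assumes stamps.txt holds one ROS timestamp per line and that frame i
    is stored under the given printf-style patterns.
    """
    with open(os.path.join(video_dir, "stamps.txt")) as f:
        stamps = [float(line) for line in f if line.strip()]
    frames = []
    for i, stamp in enumerate(stamps):
        rgb_path = os.path.join(video_dir, rgb_pattern % i)
        depth_path = os.path.join(video_dir, depth_pattern % i)
        frames.append((stamp, rgb_path, depth_path))
    return frames


def depth_mm_to_m(depth_mm):
    """Convert a depth value in millimeters (as stored in the PNGs) to meters."""
    return depth_mm / 1000.0
```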

Processing training data

You will presumably collect several runs of the whole task. A script then generates an HDF5 file that aggregates all these demonstrations, each broken into segments.

For an example of how to run the data processing scripts, see example_pipeline/overhand.py, which processes a sample dataset of demonstrations of tying an overhand knot in rope. To run it, first download the sample data with scripts/download_sampledata.py.

Execution

./do_task.py h5file

You can run this program in various simulation configurations that let you test your algorithm without using the robot.

Tips for debugging execution

- First, make sure plots are enabled for the registration algorithm so you can see the demonstration point cloud (or landmarks) being warped to match the current point cloud. Check that the transformation looks reasonable and sends the points to the right place.

- Next, enable plotting for the trajectory optimization algorithm. Look at the purple lines, which indicate the position error. Make sure the target positions and orientations (indicated by axes) are correct.

- Read the printed output of the trajectory optimization algorithm, which may reveal if something is going wrong.
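Besides inspecting the plots, you can put a number on whether the warp "sends the points to the right place": warp the demonstration cloud and measure how far each warped point is from the nearest point in the current cloud. The sketch below is hypothetical and not part of rapprentice; warp stands in for whatever transform your registration step produces, and the tolerance is an assumed value in the cloud's units. The brute-force nearest-neighbor search is only suitable for small clouds; for real point clouds a k-d tree such as scipy.spatial.cKDTree is much faster.

```python
import math  # math.dist requires Python 3.8+


def mean_nearest_distance(warped_points, target_points):
    """Average distance from each warped point to its nearest target point."""
    total = 0.0
    for p in warped_points:
        total += min(math.dist(p, q) for q in target_points)
    return total / len(warped_points)


def check_registration(warp, demo_points, current_points, tol=0.01):
    """Warp the demo cloud and report whether it lands on the current cloud.

    warp: HYPOTHETICAL callable mapping one 3-D point to another, standing
    in for the fitted registration transform.
    Returns (mean error, error <= tol).
    """
    warped = [warp(p) for p in demo_points]
    err = mean_nearest_distance(warped, current_points)
    return err, err <= tol


if __name__ == "__main__":
    demo = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
    current = [(0.5, 0.0, 0.0), (1.5, 0.0, 0.0)]
    print(check_registration(lambda p: (p[0] + 0.5, p[1], p[2]), demo, current))
```

A large error with a plausible-looking plot usually means the correspondences are off somewhere, which is worth resolving before looking at trajectory optimization.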

Extras

Various other scripts are included in the scripts directory:

- view_kinect.py: view the live RGB+depth images from your PrimeSense camera.

- command_pr2.py: for conveniently commanding the PR2, run ipython -i command_pr2.py. You can then control the PR2 from IPython by typing commands such as pr2.rarm.goto_posture('side') or pr2.head.set_pan_tilt(0, 1).

- animate_demo.py: animates a demonstration.

Miscellaneous notes

PR2.py is set up so that you can send commands to multiple body parts simultaneously, so most commands, such as goto_joint_positions, are non-blocking. To wait until all commands are done, call pr2.join_all().
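The non-blocking pattern (send commands to several body parts, then wait with join_all) can be illustrated with a toy mock built on threads. Everything in it (MockBodyPart, MockRobot, goto) is hypothetical and is not how PR2.py is implemented; it only shows why join_all() is needed before assuming the robot has reached its targets.

```python
import threading
import time


class MockBodyPart:
    """Toy stand-in for a robot body part whose commands run in the background."""

    def __init__(self, name):
        self.name = name
        self.log = []  # targets reached so far, in order
        self._thread = None

    def goto(self, target, duration=0.05):
        """Start a (pretend) motion and return immediately."""
        self.join()  # a body part executes one command at a time

        def run():
            time.sleep(duration)  # simulate the motion taking time
            self.log.append(target)

        self._thread = threading.Thread(target=run)
        self._thread.start()

    def join(self):
        """Block until this body part's current command has finished."""
        if self._thread is not None:
            self._thread.join()


class MockRobot:
    def __init__(self):
        self.rarm = MockBodyPart("rarm")
        self.larm = MockBodyPart("larm")
        self.head = MockBodyPart("head")

    def join_all(self):
        """Block until every body part has finished its current command."""
        for part in (self.rarm, self.larm, self.head):
            part.join()


if __name__ == "__main__":
    robot = MockRobot()
    robot.rarm.goto("side")   # returns at once
    robot.head.goto((0, 1))   # runs concurrently with the arm motion
    robot.join_all()          # wait for both before continuing
    print(robot.rarm.log, robot.head.log)
```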

Example training and execution pipeline (brett)

Preparation

- Make sure the Kinect is plugged in. For unknown reasons, it must be plugged into a USB 2.0 port. We suggest plugging it into masterlab and using masterlab for the rest of the steps (optionally via ssh at [sibi@]dhcp-223-149.banatao.berkeley.edu).

- Be in brett mode (run brett).

- Start the robot. Use teleop to move it into place and rotate the head to aim the Kinect. teleop_kb_general may be easier than the joystick if you have forgotten the joystick commands.

- Run scripts/view_kinect.py to view the Kinect output. Make sure everything is visible as expected. Turn on the lights if working late at night.

- Edit rapprentice/cloud_proc_funcs.py and add a color filter for the task, or choose one that has already been written. Follow the example code there and see the OpenCV documentation for help. Turn on DEBUG_PLOTS to debug each filter. The color filter function is called during processing.

- Run sudo ntpdate c1 to sync the clock with the robot (replace c1 with the robot computer's name). Unsynchronized clocks cause jerky motions from the robot.
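A color filter typically thresholds pixels in HSV space and combines the result with a depth-validity mask. The sketch below shows the idea on plain Python lists using only the standard library's colorsys, so the function names, input format, and thresholds are all hypothetical; the filters in cloud_proc_funcs.py use OpenCV, and a new filter should copy the signature of the existing examples there. Note that cv2.imread returns BGR images and that cv2.cvtColor with COLOR_BGR2HSV scales hue to 0-179 for 8-bit images, so thresholds carried over from this sketch must be rescaled.

```python
import colorsys

# HYPOTHETICAL thresholds for a "red" filter; tune them for your task and
# lighting. colorsys hue is in [0, 1), and red wraps around 0.
HUE_MAX = 0.05
HUE_MIN_WRAP = 0.95
SAT_MIN = 0.4
VAL_MIN = 0.4


def is_red(rgb):
    """Return True if an (r, g, b) pixel with 0-255 channels looks red."""
    r, g, b = (c / 255.0 for c in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    return (h <= HUE_MAX or h >= HUE_MIN_WRAP) and s >= SAT_MIN and v >= VAL_MIN


def red_mask(rgb_pixels, depth_mm):
    """Keep pixels that look red AND have a valid depth reading (> 0 mm)."""
    return [is_red(px) and d > 0 for px, d in zip(rgb_pixels, depth_mm)]
```

Requiring valid depth matters because pixels without a depth reading cannot be turned into 3-D points anyway.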

Recording

Start teleop and mannequin. Joystick (default) teleop is recommended.

- Check the joystick gripper controls.

- Left/Right on the d-pad controls the left gripper; Square/Circle controls the right gripper.

- Turn on the joystick with the power button in the middle.

- If the batteries are empty, charge the joystick with its dedicated charger. It charges very slowly if plugged into a USB port instead.

Move the robot's arms to check that mannequin is working. The arms should move, but not too easily. Restart mannequin if needed; it should always be the last thing started.

Make a directory somewhere to store the demos.

- Run scripts/record_demo.py NAME PATH_TO_MASTER_FILE.

- NAME is the name under which the demonstration is saved.

- PATH_TO_MASTER_FILE is a YAML file that stores metadata. For the first recording no master file exists yet, so pass the path to a non-existent YAML file (usually name.yaml) and one will be created.

- When adding demonstrations to an existing set, pass the existing master file.

Once recording begins, mark the start of each segment with a look followed by a start. The transformation is based on the state at the time of the look.

Manually move the robot's arms as desired, and use the joystick to open and close the grippers. When a segment is finished, press stop. Repeat the look, start, stop cycle for each segment or configuration. Try to use robot-friendly motions.
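The look, start, stop protocol can be made concrete with a small validator that groups the button presses into segments and rejects out-of-order sequences. The (name, time) event representation and the events_to_segments helper are hypothetical and do not reflect the format record_demo.py writes; the sketch only illustrates the expected ordering, keeping the look time because the transformation is based on the state at that moment.

```python
def events_to_segments(events):
    """Group (name, time) events into look/start/stop segments.

    events: list of (name, time) pairs with name in {"look", "start", "stop"}.
    Raises ValueError if the events do not follow look -> start -> stop.
    """
    next_event = {"look": "start", "start": "stop", "stop": "look"}
    segments = []
    current = {}
    expected = "look"
    for name, t in events:
        if name != expected:
            raise ValueError(f"expected {expected!r} at t={t}, got {name!r}")
        current[name] = t
        if name == "stop":  # segment complete
            segments.append(current)
            current = {}
        expected = next_event[name]
    if current:
        raise ValueError("recording ended in the middle of a segment")
    return segments
```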

When finished, press Ctrl-C to interrupt the script, then save the file. To start over and discard the recording, tell the script not to save; it may save the files anyway, in which case delete them from the folder manually.

Processing

If you recorded multiple demonstrations, check (and if necessary edit) the YAML master file so that it includes the paths to all the demonstrations; they will then be merged into one h5 file.

- Run scripts/generate_h5.py PATH_TO_MASTER_FILE --cloud_proc_func=COLOR_FILTER_NAME

- PATH_TO_MASTER_FILE is the same master file as in the recording step.

- COLOR_FILTER_NAME is the name of a function defined in cloud_proc_funcs.py.

- Debug the color filter at this stage by setting DEBUG_PLOTS to True and viewing the filtered output.

- Ignore warnings about unused joint states.

If you get an error that stamps.txt was not found, the script may not have the correct path to the images folder. If the images folder is named name0#1, rename it to name0.

If you get an error about rgb being None, edit the script so that the RGB file names it constructs match the actual image names. For example, if the files are named 00025.jpg, use %05d.jpg.
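Both fixes are mechanical. The helpers below are hypothetical and not part of the processing script: one derives the printf-style pattern from an existing frame file name, the other strips a #-suffix from a folder name. They assume fixed-width, zero-padded frame numbers, and the actual renaming or script edit is left to you.

```python
import re

# Optional non-digit prefix, the frame number, then the extension,
# e.g. "00025.jpg" or "rgb00025.jpg".
_NAME_RE = re.compile(r"^(\D*)(\d+)(\.\w+)$")


def infer_name_pattern(filename):
    """Infer a pattern such as "%05d.jpg" from one zero-padded frame name."""
    m = _NAME_RE.match(filename)
    if m is None:
        raise ValueError(f"cannot infer a pattern from {filename!r}")
    prefix, digits, ext = m.groups()
    return f"{prefix}%0{len(digits)}d{ext}"


def strip_copy_suffix(dirname):
    """Turn a folder name like "name0#1" back into "name0"."""
    return dirname.split("#", 1)[0]
```

For example, infer_name_pattern("00025.jpg") gives "%05d.jpg", and applying that pattern to 25 reproduces the original name.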

If needed, inspect the h5 file with the Python h5py package. Optionally, merge it with another h5 file containing other segments using h5py's .copy() method; see the h5py documentation for details. Use this method of merging multiple demonstrations if the master-file method fails.
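A minimal sketch of inspecting and merging with h5py, assuming that each top-level group in the processed file is one segment (check this against your own file with print_tree first). print_tree, list_segments, and merge_segments are hypothetical helpers; the merge relies on h5py's Group.copy, which can copy groups between two open files, and it refuses to overwrite segments whose names already exist in the destination.

```python
import h5py


def print_tree(path):
    """Print the path of every group and dataset in the file."""
    with h5py.File(path, "r") as f:
        f.visit(print)


def list_segments(path):
    """Return the names of the top-level groups (assumed to be segments)."""
    with h5py.File(path, "r") as f:
        return list(f.keys())


def merge_segments(src_path, dst_path):
    """Copy every top-level group of src_path into dst_path.

    Checks for name clashes first, so nothing is copied if any segment
    name already exists in the destination file.
    """
    with h5py.File(src_path, "r") as src, h5py.File(dst_path, "a") as dst:
        clashes = [name for name in src if name in dst]
        if clashes:
            raise KeyError(f"segments already in {dst_path}: {clashes}")
        copied = []
        for name in src:
            src.copy(src[name], dst, name=name)  # cross-file copy
            copied.append(name)
    return copied
```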

Execution

Make sure mannequin and teleop are stopped.

Check that the Kinect is still aimed properly.

- Run scripts/do_task.py PATH_TO_H5 --execution=1 --cloud_proc_func=COLOR_FILTER_NAME

- Use the h5 file and color filter from the previous section.

- Use --animation=1 to debug the transformation and point clouds.

- Use --select_manual to select the segment manually instead of using the cost function.

- Debug and tune the color filter again here if necessary.
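The flags above can be collected in one helper so that debugging runs are easy to repeat. This is a sketch: do_task_command is hypothetical, it emits only the flags named in this document, and any option it leaves out falls back to whatever default do_task.py itself defines.

```python
def do_task_command(h5_path, cloud_proc_func, execution=False, animation=False,
                    select_manual=False, script="scripts/do_task.py"):
    """Build an argv list for do_task.py from the options described above."""
    cmd = [script, h5_path, f"--cloud_proc_func={cloud_proc_func}"]
    if execution:
        cmd.append("--execution=1")    # execute on the robot
    if animation:
        cmd.append("--animation=1")    # debug transformation and point clouds
    if select_manual:
        cmd.append("--select_manual")  # choose the segment by hand
    return cmd


if __name__ == "__main__":
    # Placeholder file and filter names; substitute your own.
    cmd = do_task_command("demos.h5", "my_color_filter", execution=True, animation=True)
    print(" ".join(cmd))
    # To launch it: import subprocess; subprocess.run(cmd, check=True)
```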